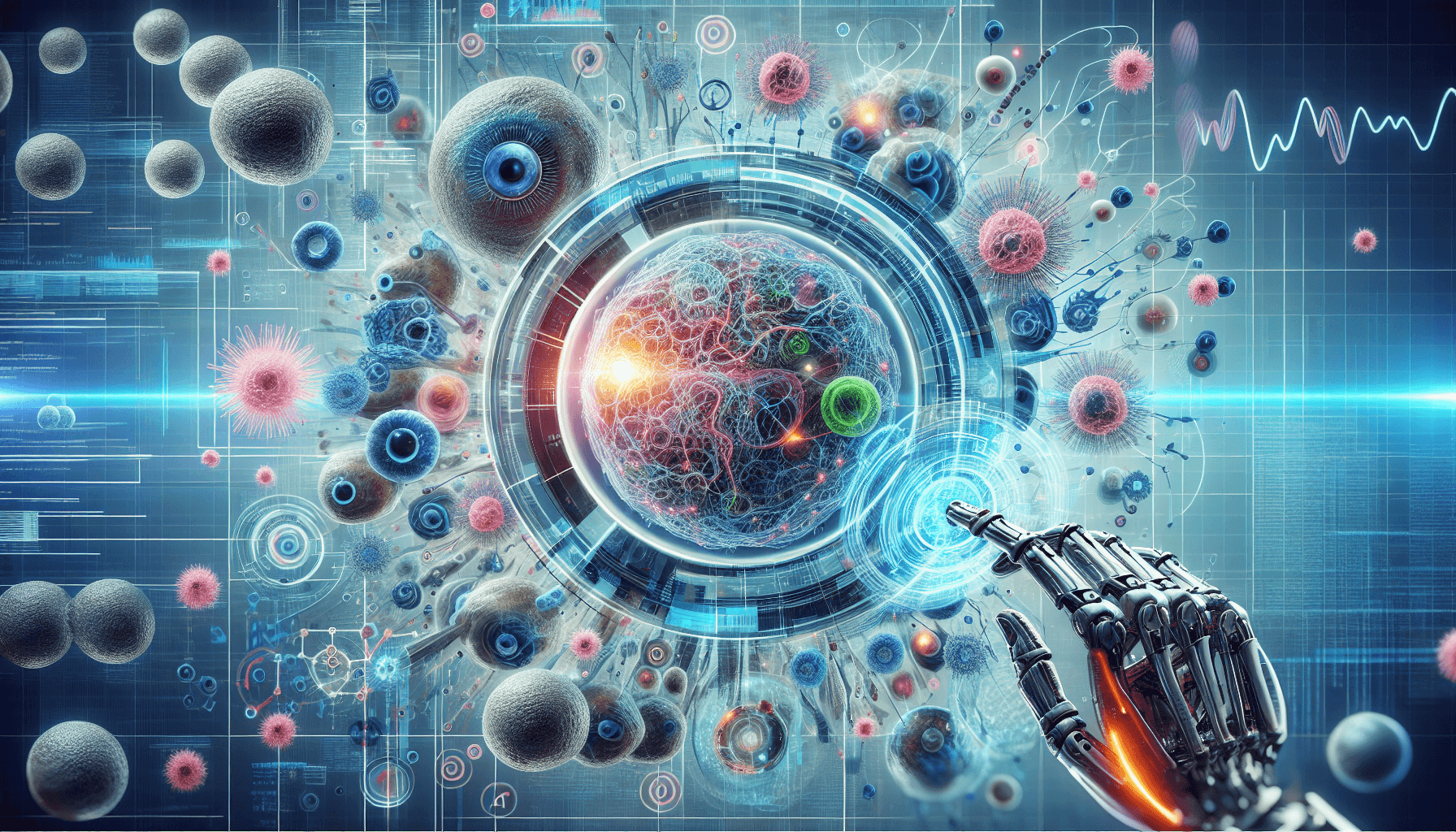

Researchers at Caltech have developed CellSAM, the first artificial intelligence system capable of automatically identifying and segmenting cells across diverse biological contexts using a single unified model. The breakthrough technology can analyze everything from cancer biopsies to immune cell behavior without requiring separate models for different cell types or imaging methods. Published in Nature Methods and made freely available to researchers worldwide, CellSAM addresses a fundamental bottleneck that has limited the pace of biological discovery for decades.

Cell segmentation, the process of precisely identifying individual cells within complex biological images, has long been one of the most time-consuming and error-prone aspects of biological research. Until now, scientists have needed specialized models for each cell type and imaging technique, creating a fragmented landscape of tools that slowed research and limited cross-disciplinary collaboration. CellSAM's universal approach promises to accelerate discoveries across cancer research, immunology, developmental biology, and drug development by providing researchers with a single, powerful tool for cellular analysis.
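To make the idea concrete, segmentation output is commonly stored as a label mask: an integer image in which 0 marks background and every cell gets its own positive ID. The sketch below is illustrative only. It uses NumPy on a tiny hand-made array rather than real CellSAM output, and `summarize_label_mask` is a hypothetical helper name, not part of any CellSAM API.

```python
import numpy as np

def summarize_label_mask(mask: np.ndarray) -> dict:
    """Return {cell_id: pixel_area} for a labeled mask; ID 0 is background."""
    # bincount tallies how many pixels carry each non-negative integer label.
    counts = np.bincount(mask.ravel())
    return {int(i): int(c) for i, c in enumerate(counts) if i != 0 and c > 0}

# Toy 4x5 "image" containing three cells (IDs 1, 2, 3). Values are invented.
mask = np.array([
    [0, 1, 1, 0, 0],
    [0, 1, 1, 0, 2],
    [0, 0, 0, 2, 2],
    [3, 0, 0, 2, 2],
])

areas = summarize_label_mask(mask)
print(f"{len(areas)} cells, areas in pixels: {areas}")
```

Once cells are encoded this way, counts, sizes, and shapes can be computed per cell, which is what makes an accurate segmentation step so valuable downstream.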

Breaking Down Biological Barriers

The development of CellSAM tackles a fundamental challenge that has plagued biological research since the advent of digital microscopy. Traditional cell segmentation tools require extensive training on specific cell types and imaging modalities, meaning a model trained to identify neurons cannot effectively recognize cancer cells, and a system designed for fluorescence microscopy fails with brightfield imaging. This fragmentation has forced research teams to either develop expertise across multiple specialized tools or collaborate extensively just to analyze different aspects of the same biological system.

CellSAM's architecture builds on Meta's Segment Anything Model (SAM), a general-purpose image segmentation model, and adds biological domain knowledge and training data spanning multiple cell types, tissues, and imaging techniques. The model can move from analyzing the intricate branching patterns of neural dendrites to identifying the irregular boundaries of metastatic cancer cells while maintaining high accuracy across microscopy methods including fluorescence, phase contrast, and electron microscopy.

Transforming Cancer Research and Diagnostics

The implications for cancer research are particularly significant, as CellSAM can automatically identify and analyze cancer cells within biopsy samples with unprecedented consistency and speed. Traditional pathological analysis relies heavily on manual examination by trained specialists, a process that is not only time-intensive but also subject to inter-observer variability. CellSAM's ability to precisely segment cancer cells while distinguishing them from surrounding healthy tissue could standardize diagnostic processes and enable more quantitative approaches to cancer staging and treatment planning.
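Inter-observer variability can be put into numbers with an overlap score. One common choice is the Dice coefficient, which is 1.0 when two masks agree perfectly and 0.0 when they do not overlap at all. The sketch below assumes two binary masks of the same region drawn by two annotators; the arrays and the `dice` helper are invented for illustration and do not come from the CellSAM paper.

```python
import numpy as np

def dice(mask_a, mask_b) -> float:
    """Dice coefficient between two binary masks of the same shape."""
    a = np.asarray(mask_a, dtype=bool)
    b = np.asarray(mask_b, dtype=bool)
    total = a.sum() + b.sum()
    if total == 0:
        return 1.0  # both masks empty: treat as perfect agreement
    return float(2.0 * np.logical_and(a, b).sum() / total)

# Hypothetical annotations of the same cell by two pathologists.
annotator_a = np.zeros((4, 4), dtype=int)
annotator_a[1:3, 1:3] = 1   # 4 pixels

annotator_b = np.zeros((4, 4), dtype=int)
annotator_b[1:3, 1:4] = 1   # 6 pixels, one column wider

score = dice(annotator_a, annotator_b)
print(f"Dice agreement: {score:.2f}")
```

The same score can be computed between a model's mask and a human annotation, which gives one way to compare automated and manual segmentation on equal terms.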

Beyond basic identification, the system can track morphological changes in cancer cells over time, potentially revealing insights into tumor progression, treatment response, and drug resistance mechanisms. This capability is especially valuable for immunotherapy research, where understanding the spatial relationships between cancer cells and infiltrating immune cells is crucial for predicting treatment outcomes. Researchers can now analyze these complex interactions automatically across large datasets, potentially identifying new biomarkers for treatment selection.
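One simple way to express the spatial relationship between cancer cells and immune cells is the distance from each cancer cell to its nearest immune cell. The sketch below assumes cell centroids have already been extracted from a segmentation and assigned a cell type, a step that is not shown here. The coordinates and the `nearest_distance` helper are made up for illustration.

```python
import numpy as np

def nearest_distance(sources, targets) -> np.ndarray:
    """For each source point, the Euclidean distance to the closest target point."""
    sources = np.asarray(sources, dtype=float)
    targets = np.asarray(targets, dtype=float)
    # Broadcasting builds a (n_sources, n_targets, 2) array of coordinate differences.
    diffs = sources[:, None, :] - targets[None, :, :]
    dists = np.sqrt((diffs ** 2).sum(axis=2))
    return dists.min(axis=1)

# Hypothetical centroids in pixel coordinates (x, y).
tumor_centroids = [(0.0, 0.0), (10.0, 0.0)]
immune_centroids = [(3.0, 4.0), (10.0, 5.0)]

nearest = nearest_distance(tumor_centroids, immune_centroids)
print(nearest)
```

Summaries of these distances across a tissue section are one kind of quantity a study could examine as a candidate biomarker, although which measures actually predict treatment outcomes is an empirical question.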

Accelerating Immunology and Drug Discovery

In immunology research, CellSAM's ability to simultaneously track multiple cell types opens new possibilities for understanding immune system dynamics. The model can follow individual T cells, B cells, and antigen-presenting cells within the same tissue sample, automatically quantifying their interactions and movements over time. Previously, this kind of analysis required combining multiple specialized tools with extensive manual validation and often took weeks; CellSAM can complete the same work in hours.

The pharmaceutical industry stands to benefit significantly from CellSAM's capabilities in drug screening and development. The technology can automatically assess how potential therapeutic compounds affect cell viability, morphology, and behavior across different cell lines simultaneously. This standardized approach to cellular analysis could reduce variability between studies, improve reproducibility of results, and accelerate the identification of promising drug candidates. Early adopters in the pharmaceutical industry are already integrating CellSAM into their high-throughput screening pipelines.

Open Science and Global Impact

Caltech's decision to make CellSAM freely available to researchers worldwide reflects a growing trend toward open science in AI development, and it also addresses practical concerns about research equity. Advanced cell segmentation tools have historically been expensive and required significant computational resources, creating barriers for researchers at smaller institutions or in developing countries. By releasing CellSAM as an open-source tool with relatively modest computational requirements, Caltech broadens access to state-of-the-art cellular analysis.

The open-source approach also enables rapid community-driven improvements and adaptations. Researchers can fine-tune CellSAM for their specific applications, contribute training data for underrepresented cell types or imaging conditions, and develop specialized extensions for emerging techniques. This collaborative model is expected to accelerate the tool's evolution and ensure it keeps pace with advances in microscopy technology and biological understanding.

Future Implications and Research Directions

The success of CellSAM reflects a broader shift in biological AI from narrow, specialized applications toward more generalizable systems that can adapt to diverse research contexts. This trend parallels developments in other AI domains, where foundation models trained on diverse datasets have shown better performance and flexibility than task-specific alternatives. Future versions of CellSAM are expected to incorporate additional biological knowledge, including molecular markers and temporal dynamics, potentially enabling more sophisticated analyses of cellular behavior.

Looking ahead, the integration of CellSAM with emerging technologies like spatial transcriptomics and proteomics could create comprehensive platforms for multi-modal biological analysis. Researchers envision systems that can simultaneously track cell morphology, gene expression, and protein localization within the same tissue samples, providing unprecedented insights into biological processes. Such integrated approaches could revolutionize our understanding of development, disease, and aging at the cellular level, ultimately leading to more effective therapeutic interventions.
